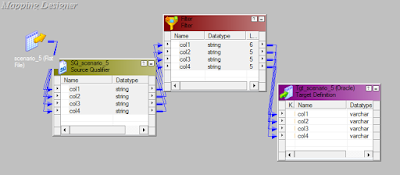

Unlike the Direct method, the Indirect file method is used when we have multiple source files with the same structure and want to load them all into a single target.

To configure the Indirect method:

----------------------------------

- Create a flat file (the file list) and paste the paths of all the source flat files into it.

- Drag the source definition of any one of the flat files into the mapping and develop the code.

- In the session-level properties, set the source file type to 'Indirect' instead of 'Direct'.

- Give the path of the file list in the source file directory field.

- Give the name of the file list (the common file that holds all the paths) in the source filename field.
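The first step above, building the file list, can be scripted rather than done by hand. The following is a minimal sketch, assuming the list should hold one source file path per line; the helper name `write_file_list`, the `sales_*.csv` pattern, and the `sales_list.txt` name are hypothetical and used only for illustration.

```python
from pathlib import Path
import tempfile


def write_file_list(source_dir, pattern, list_path):
    """Write the full path of every file in source_dir that matches
    pattern into list_path, one path per line (assumed list layout)."""
    list_path = Path(list_path).resolve()
    paths = sorted(
        p.resolve()
        for p in Path(source_dir).glob(pattern)
        # Skip directories and never list the file list itself.
        if p.is_file() and p.resolve() != list_path
    )
    list_path.write_text("".join(f"{p}\n" for p in paths))
    return paths


if __name__ == "__main__":
    # Demo in a throwaway directory with hypothetical file names.
    with tempfile.TemporaryDirectory() as tmp:
        tmp = Path(tmp)
        for name in ("sales_jan.csv", "sales_feb.csv"):
            (tmp / name).write_text("id,amount\n")
        write_file_list(tmp, "sales_*.csv", tmp / "sales_list.txt")
        print((tmp / "sales_list.txt").read_text())
```

With a list produced this way, the directory containing `sales_list.txt` would go in the source file directory field and `sales_list.txt` itself in the source filename field.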
