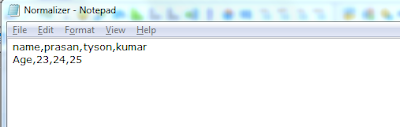

The Normalizer transformation is an active, connected transformation. It converts a single row that contains repeating columns into multiple rows, turning a de-normalized table into a normalized one. Unlike most other transformations, you cannot drag and drop columns into a Normalizer transformation.

* Normalizer is used to convert columns into rows.

* Normalizer is used in place of the Source Qualifier when reading mainframe or COBOL sources.
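To make the idea concrete, here is a minimal Python sketch of what normalizing a row means. It is only an illustration of the concept, not Informatica code; the function name `normalize` and the sample fields (`store`, `q1` to `q4`, `sales`) are made up for this example.

```python
def normalize(row, key_field, repeating_fields, value_name):
    """Turn one wide row into one narrow row per repeating column."""
    return [
        {key_field: row[key_field], value_name: row[col]}
        for col in repeating_fields
    ]


# Hypothetical de-normalized record: one store, four quarterly sales columns.
wide = {"store": "S1", "q1": 100, "q2": 200, "q3": 150, "q4": 300}

# One input row becomes four output rows, one per quarter column.
narrow = normalize(wide, "store", ["q1", "q2", "q3", "q4"], "sales")

for r in narrow:
    print(r)
```

The row count changes from one to four, which is why the transformation is classed as active.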

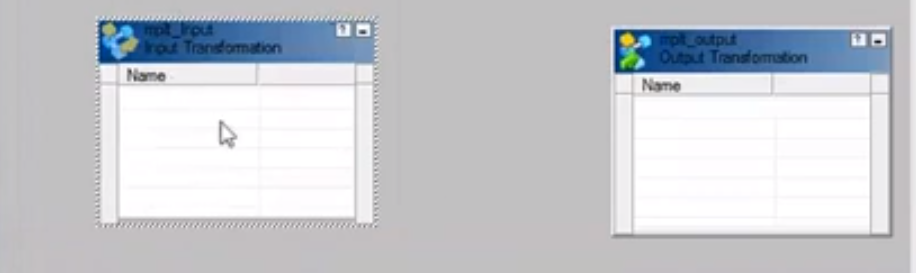

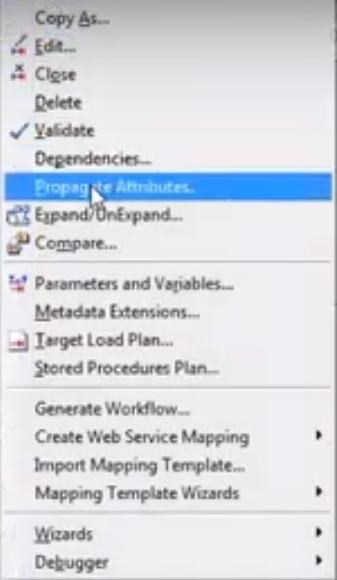

Steps to create a Normalizer transformation:

-----------------------------------------------------------------

- Go to the Transformations tab and select Normalizer

- Double-click the Normalizer and select the Normalizer tab

- Add the Occurs values based on the requirement

The Normalizer Properties tab has two options, 'Reset' and 'Restart'.

Reset returns the GK value, at the end of the session, to the value it had before the session started.

Restart starts the GK sequence from 1 each time a session runs.
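The difference between the two options can be sketched with a small Python simulation. This is an assumption-laden model, not Informatica's implementation: the class `GeneratedKey`, its `stored_value` attribute and the `run_session` method are hypothetical, and the default behavior (the sequence carrying on from where the last session stopped when neither option is set) is an assumption made for the sketch.

```python
class GeneratedKey:
    """Toy model of a GK sequence whose value is saved between sessions."""

    def __init__(self, stored_value=1):
        # Assumption: the next key value is persisted between sessions.
        self.stored_value = stored_value

    def run_session(self, n_rows, reset=False, restart=False):
        # Restart: ignore the saved value and begin at 1.
        start = 1 if restart else self.stored_value
        keys = list(range(start, start + n_rows))
        # Reset: leave the saved value as it was before the session.
        if not reset:
            self.stored_value = start + n_rows
        return keys


gk = GeneratedKey()
first = gk.run_session(3)                    # neither option set
second = gk.run_session(2)                   # continues after the first run
third = gk.run_session(2, reset=True)        # saved value is not advanced
fourth = gk.run_session(2)                   # so this run reuses the same start
fifth = gk.run_session(2, restart=True)      # begins again at 1

print(first, second, third, fourth, fifth)
```

In this model, Reset affects what the next session sees, while Restart affects where the current session begins.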

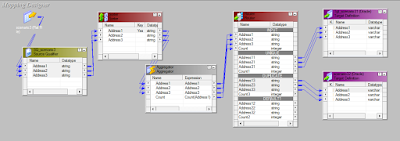

There are two important ports: GK (Generated Key) and GCID (Generated Column ID).

GK generates a sequence number starting from the value defined in the sequence field.

GCID holds the occurrence number of the repeating column.

Normalizer generates separate rows based on the occurrences defined in the transformation.

GK identifies which output rows belong to the same original record: every row generated from one source record gets the same key value, such as 1 or 2.

GCID assigns a column ID to each generated occurrence within a record, such as 1, 2, 3.
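The way GK and GCID relate to the output rows can be sketched in Python as follows. This is an illustration only; the function `normalize_with_keys`, the parameter `gk_start` and the sample fields (`name`, `m1` to `m3`) are hypothetical and do not correspond to any Informatica API.

```python
from itertools import count


def normalize_with_keys(rows, repeating_fields, gk_start=1):
    """Emit one output row per occurrence, tagged with GK and GCID."""
    gk_seq = count(gk_start)  # one key per source record
    out = []
    for row in rows:
        gk = next(gk_seq)
        # GCID counts the occurrences within a record, starting at 1.
        for gcid, col in enumerate(repeating_fields, start=1):
            out.append(
                {"name": row["name"], "GK": gk, "GCID": gcid, "value": row[col]}
            )
    return out


# Two hypothetical source records, each with three occurrences.
source = [
    {"name": "A", "m1": 10, "m2": 20, "m3": 30},
    {"name": "B", "m1": 40, "m2": 50, "m3": 60},
]

result = normalize_with_keys(source, ["m1", "m2", "m3"])

for r in result:
    print(r)
```

Rows that share a GK value came from the same source record, and the GCID value tells you which occurrence each row was built from.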